Emic Automata & Thematic Automata

Emic Automata and Thematic Automata are a pair of computer-generated novels, originally produced for NaNoGenMo 2017, based on the idea of using elementary cellular automata as a generative method for producing writing.

Concept

Cellular automata are usually modelled as binary states in a list (1D) or grid (2D) of cells. At every point in the evolution of an automaton, each cell will be in either an on or off state. Straight away, this suggests that the key qualities of a generator will be repetition and contrast.

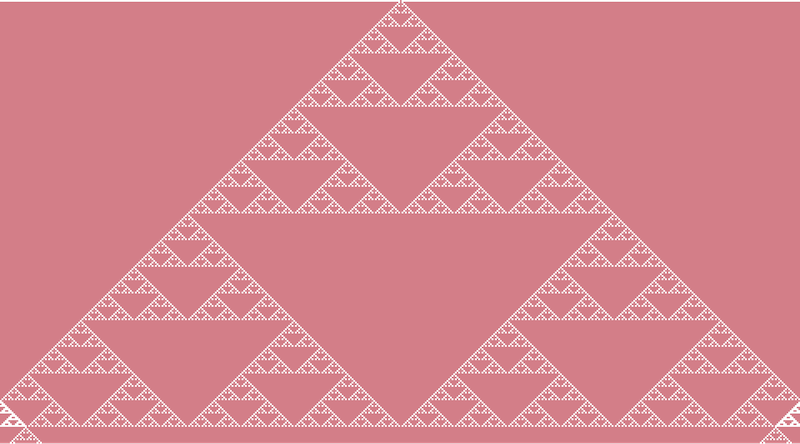

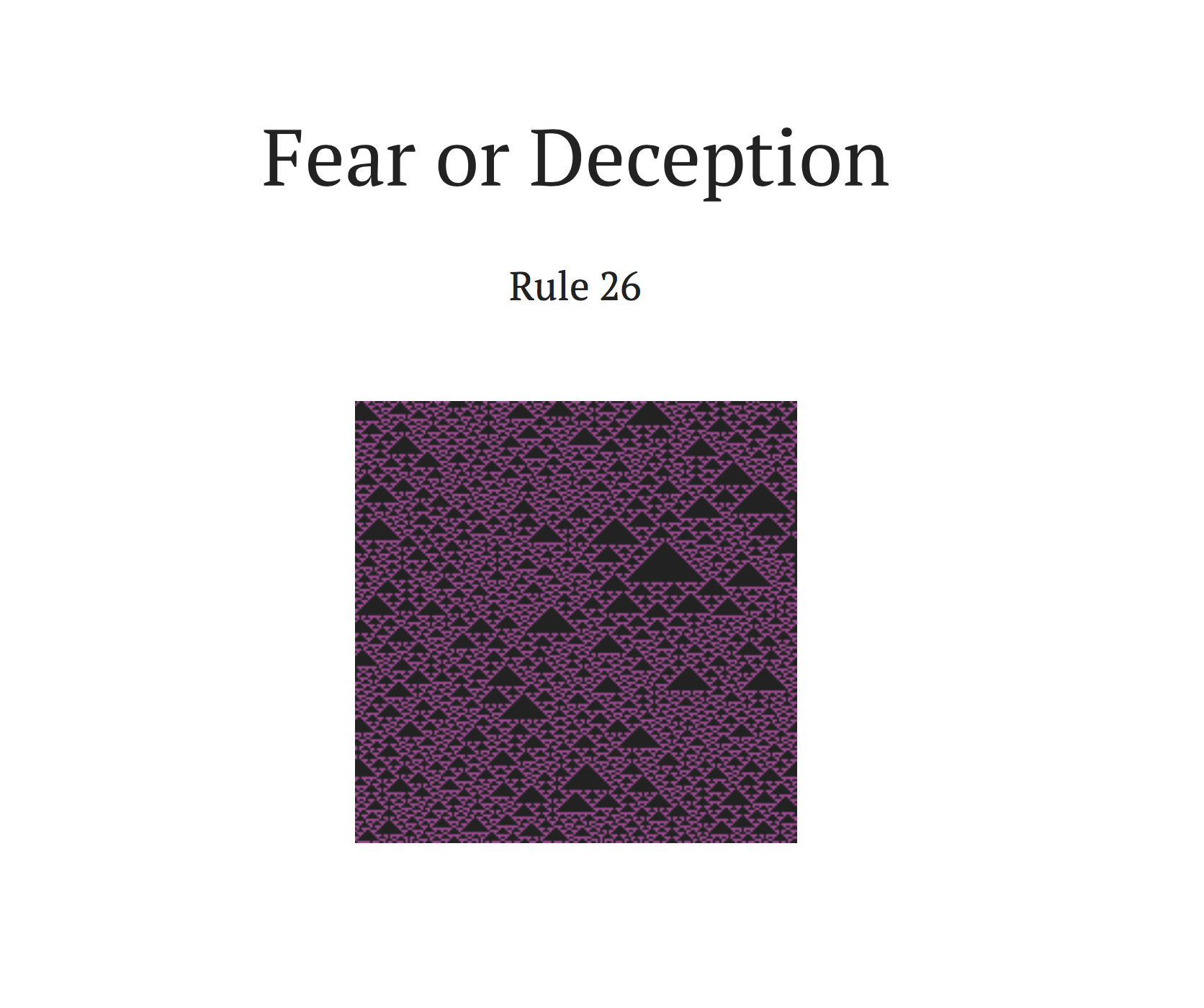

Usually, the on/off state of a 1D automaton is represented visually by colouring pixels or grid cells. After my PROCJAM project, I started wondering what was possible with other more abstract representations. This question reminded me of the odd structural idea behind Infinite Jest as a novel shaped around Sierpinski triangles. So what would a novel shaped around cellular automata look like?

A cellular automaton reproducing Sierpinski triangle-like structures. Rather than fractally subdividing triangles, the automaton moves cell by cell, line by line and produces an almost identical pattern.

One dimensional writing machines

Over multiple generations, the linear sequences of cells in an elementary cellular automaton closely reflect the basic visual structure of a book, which is usually made up of linear sequences of characters, words, lines, paragraphs and pages.

Turning a 1D automaton into a writing machine means deciding what each cell state is going to represent in the text output. This is where the two books diverged.

In my implementation for NaNoGenMo, automata start from a width (number of cells in a generation), a height (number of generations to evolve for), a randomly shuffled first generation and a binary rule code from the numbering system popularised by Wolfram.
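As a rough illustration of those four inputs, here is a minimal Python sketch of an elementary automaton. It is not the code used for the books: the function names, the wrap-around edges and the half-on, half-off starting row are all assumptions made for the example.

```python
import random

def rule_table(rule_number):
    """Expand a Wolfram rule number (0-255) into 8 outputs, indexed by neighbourhood."""
    return [(rule_number >> i) & 1 for i in range(8)]

def step(cells, table):
    """Compute the next generation. Edges wrap around (an assumption)."""
    width = len(cells)
    return [
        table[(cells[(i - 1) % width] << 2) | (cells[i] << 1) | cells[(i + 1) % width]]
        for i in range(width)
    ]

def evolve(width, height, rule_number, seed=None):
    """Return a list of `height` generations, each a list of `width` cells."""
    rng = random.Random(seed)
    # Assumption: the first generation is half on, half off, then shuffled.
    first = [1] * (width // 2) + [0] * (width - width // 2)
    rng.shuffle(first)
    table = rule_table(rule_number)
    generations = [first]
    for _ in range(height - 1):
        generations.append(step(generations[-1], table))
    return generations
```

Calling `evolve(40, 20, 90)` would give twenty rows of forty cells each, ready to be mapped onto text.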

Because many of the 256 rules in the Wolfram code converge to a uniform stable state, I decided to cherry-pick a specific subset of the more interesting rules and randomly select one for each run of the generator.
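The subset for the books was picked by hand, but a crude automated stand-in might look like the sketch below. It simply discards rules whose cells all end up in the same state after a fixed number of generations, then picks one of the survivors at random. The function names and the 64-generation cutoff are hypothetical, and the check says nothing about whether a surviving rule is actually interesting.

```python
import random

def next_generation(cells, rule_number):
    """Apply an elementary rule once, wrapping around at the edges."""
    width = len(cells)
    out = []
    for i in range(width):
        # cells[i - 1] wraps to the last cell when i == 0.
        neighbourhood = (cells[i - 1] << 2) | (cells[i] << 1) | cells[(i + 1) % width]
        out.append((rule_number >> neighbourhood) & 1)
    return out

def settles_to_uniform(rule_number, first, generations=64):
    """True if every cell holds the same state after `generations` steps."""
    cells = list(first)
    for _ in range(generations):
        cells = next_generation(cells, rule_number)
    return len(set(cells)) == 1

def non_uniform_rules(first):
    """All rule numbers that do not end in a uniform row from this starting row."""
    return [rule for rule in range(256) if not settles_to_uniform(rule, first)]

def pick_rule(candidates, rng):
    """Choose one rule at random for this run of the generator."""
    return rng.choice(candidates)
```

A run might then use `pick_rule(non_uniform_rules(first), random.Random())`, where `first` is the shuffled starting row.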

Fixed width lines

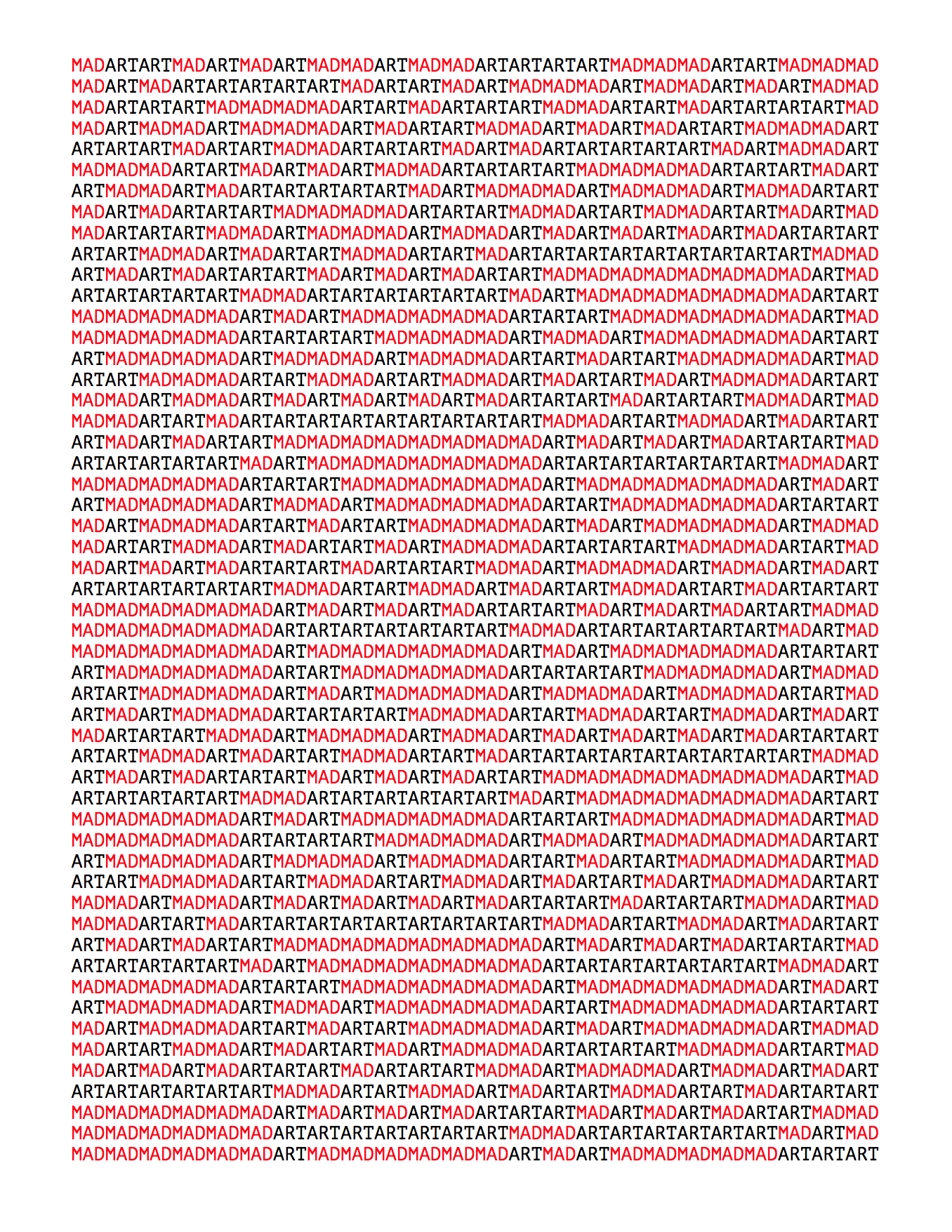

Emic Automata is set in a fixed width format with a monospaced font. Each cell is mapped to a pair of words of the same length and the text output gets generated much the same way as it would be graphically in pixels or grid squares.

In the generated PDF, I further reinforced this by rendering contrasting colours for each state. For three-letter words, the underlying 2D structure generated by stacking generations of a particular rule is visibly mirrored in the text. Longer words reveal a bit less about the underlying structure directly, but they still result in output that’s weird and interesting enough to be satisfying.
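The fixed-width mapping can be sketched in a few lines of Python. This assumes one word of the pair stands for the off state and the other for the on state, with a single space between cells. The function names and the example words are made up for illustration, and colour is left out entirely.

```python
def render_line(cells, off_word, on_word):
    """Render one generation as a line of equal-length words."""
    if len(off_word) != len(on_word):
        raise ValueError("both words must be the same length to keep columns aligned")
    return " ".join(on_word if cell else off_word for cell in cells)

def render_page(generations, off_word, on_word):
    """Stack generations as lines, so the 2D pattern shows through in monospace."""
    return "\n".join(render_line(row, off_word, on_word) for row in generations)
```

For example, `render_line([0, 1, 0], "sea", "sky")` gives `sea sky sea`.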

Sampled sentences

Thematic Automata works in a similar way, but maps each binary state to themes that are randomly selected from a list of common ideas:

- loneliness

- deception

- courage

- love

- freedom

- fear

- discovery

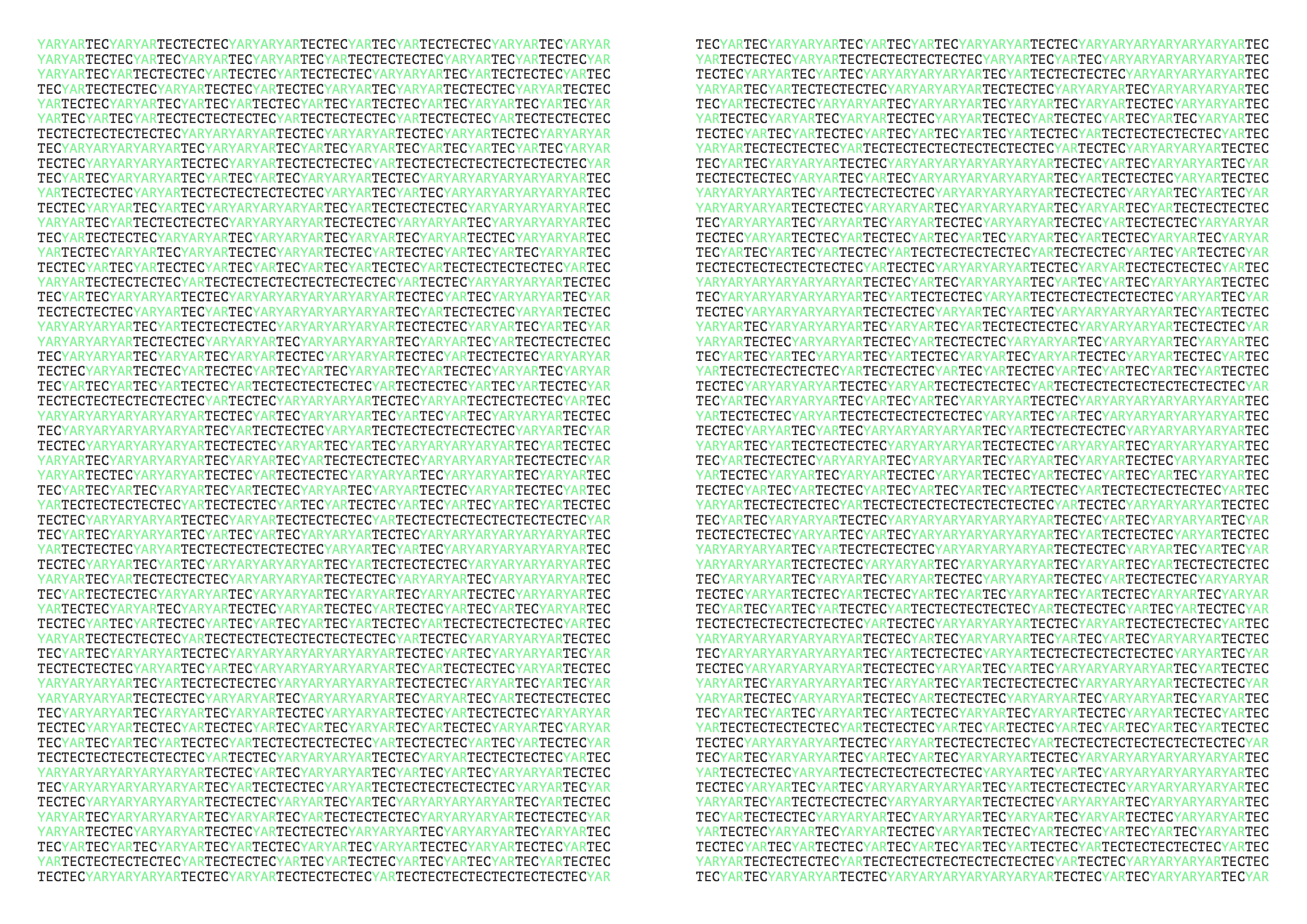

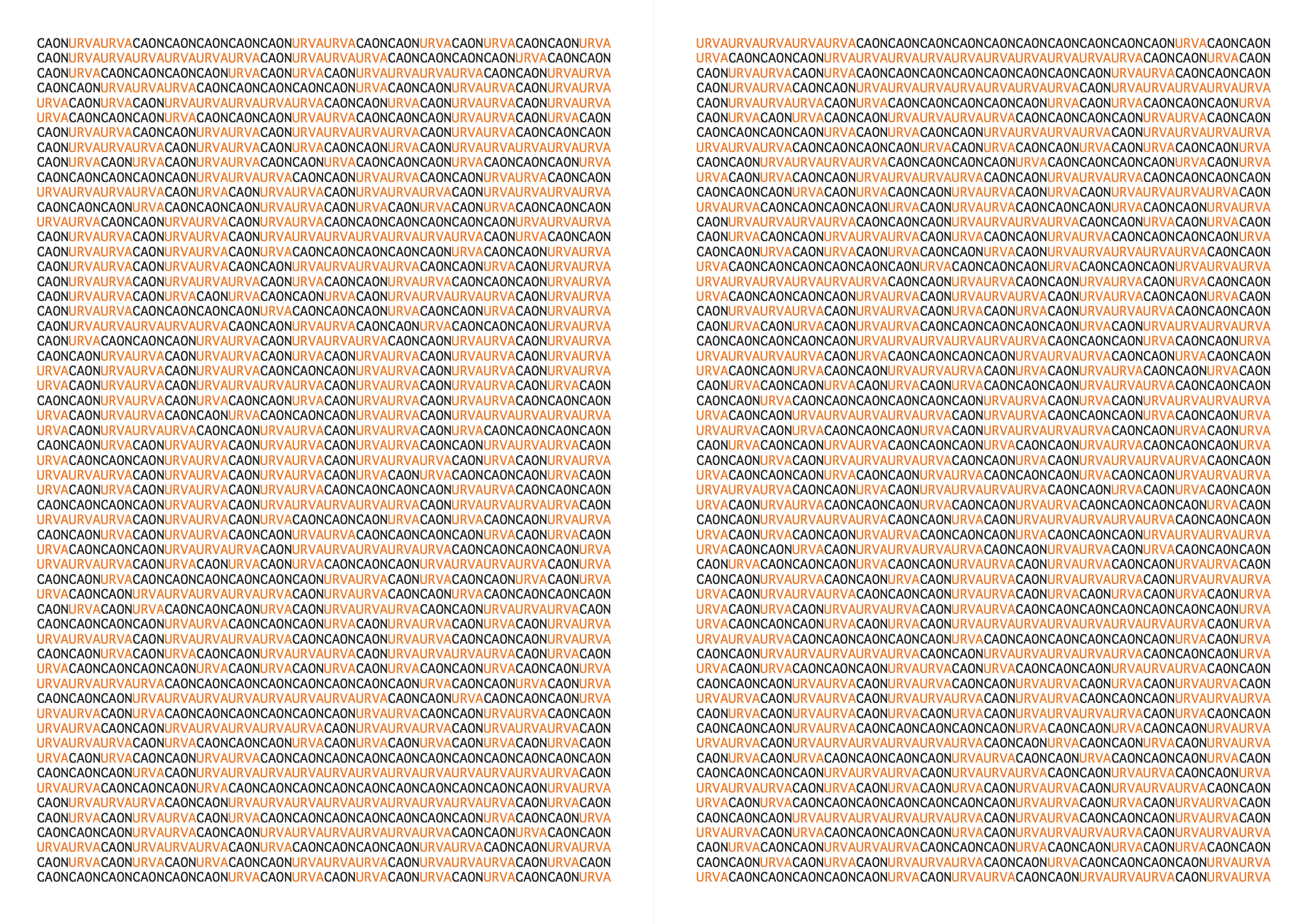

Instead of visually mapping the text output to fixed width lines, each cell produces a randomly generated sentence based on its assigned theme. These sentences are grouped into paragraphs based on the number of consecutive cells of the same state. Each paragraph thus flips from one theme to the next depending on the pattern generated by the automaton. Each generation of the automaton becomes a chapter.
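Grouping cells into paragraphs amounts to run-length encoding each generation. Here is a minimal sketch using `itertools.groupby` from the Python standard library. The function name and the shape of the return value are assumptions for the example, not the structure used in the generator.

```python
from itertools import groupby

def paragraphs_for_generation(cells, themes):
    """Return (theme, sentence_count) pairs, one per run of consecutive equal states.

    `themes` is a pair: themes[0] is the theme for off cells, themes[1] for on cells.
    """
    return [(themes[state], len(list(run))) for state, run in groupby(cells)]
```

With `themes = ("fear", "love")`, the generation `[1, 1, 0, 0, 0, 1]` becomes a chapter of three paragraphs: two sentences on love, three on fear, then one more on love.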

Sentences are generated using word frequency n-grams chained from a corpus of sentences sourced from public domain fiction on Project Gutenberg. Setting this up gave me an opportunity to play with all sorts of wonderful juxtapositions, such as blending Katherine Mansfield and Philip K. Dick.
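For readers unfamiliar with n-gram chaining, the general idea looks something like the sketch below. It is a generic word-level Markov chain, not the generator's actual code: the function names, the default order of 2 and the 40-word cap are all assumptions.

```python
import random
from collections import defaultdict

def build_chain(sentences, order=2):
    """Map each run of `order` words to the list of words seen following it.

    Repeated followers are kept, so common continuations are chosen more often.
    None marks the start and end of a sentence.
    """
    chain = defaultdict(list)
    for sentence in sentences:
        padded = [None] * order + sentence.split() + [None]
        for i in range(len(padded) - order):
            chain[tuple(padded[i:i + order])].append(padded[i + order])
    return chain

def generate_sentence(chain, rng, order=2, max_words=40):
    """Walk the chain from the start marker until an end marker or the word cap."""
    key = (None,) * order
    words = []
    while len(words) < max_words:
        following = rng.choice(chain[key])
        if following is None:
            break
        words.append(following)
        key = key[1:] + (following,)
    return " ".join(words)
```

Feeding `build_chain` sentences from two different authors is what produces the blended voice: wherever their word sequences overlap, the walk can cross from one source into the other.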

Each theme has a number of registered synonyms which are used to select a subset of potential sentences from the broader corpus. This approach is fairly brute force and inexact. It relies on the dubious assumption that a matching word in a sentence means that sentence meaningfully relates to the theme. The diversity or uniformity of the source sentences thus has a massive impact on the quality and coherence of the resulting output.
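The brute-force selection described above can be sketched as a whole-word, case-insensitive match against a theme's synonyms. The function name and the example synonyms are hypothetical.

```python
import re

def sentences_for_theme(sentences, synonyms):
    """Keep sentences containing any synonym as a whole word, ignoring case."""
    if not synonyms:
        return []
    pattern = re.compile(
        r"\b(?:" + "|".join(re.escape(word) for word in synonyms) + r")\b",
        re.IGNORECASE,
    )
    return [sentence for sentence in sentences if pattern.search(sentence)]
```

Given synonyms such as `["fear", "afraid", "terror"]`, this would keep "She was afraid of the dark." and drop "The garden was bright." It would equally keep "He had no fear of heights", which is exactly the kind of false match that makes the approach inexact.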

The goal here isn’t to write a literary masterpiece or even conform to basic expectations of coherent prose. It’s to create a particular aesthetic effect of two ideas repeating, contrasting and bouncing off one another. The effect is sometimes striking.

Covers and frontmatter

This year, I went a step beyond what I’ve done previously and rendered output directly to PDF. I’d never worked with dynamically generated PDFs before and didn’t really know much about the conventions of the format, so most of this work ended up being trial and error. Thankfully, the PDF library I was using took care of most of the heavy lifting and annoyances here.

To put covers and title pages together, I set up the generator to re-seed the configured automaton and used it to generate PNG images of the underlying rule for each book. This turned out to be quite helpful for testing as it gave me a visual reference point to check against the generated text.

Scratching the surface

While I was happy with these results, I haven’t fully made up my mind about this as a more general approach to generative writing. There is a lot more that I want to do with cellular automata in future, but I’m undecided on their efficacy for creating writing machines.

Perhaps there isn’t too much more that can be done coherently with this technique. Or perhaps I haven’t explored this area deeply enough and there are further possibilities with narrative and structural representation that I haven’t understood yet.

Please get in touch and let me know if you think of any new possibilities with this, or adapt the code for a different purpose.